It’s been almost 2 years since I last posted to this blog. Since then, related to the world of software development, two things have changed:

- I’ve been working at a large game company as a Senior Software Engineer on one of the technology teams; it’s nothing short of a total blast working with the people on my team.

- Agentic coding has arrived on the software scene, and in knowledge work in general.

These are terrifying and exciting times to be a software developer. I hope to document each step along the way.

(Skip to the bottom if you just want the ‘fun stuff’ 😀)

I started using coding assistants only recently. In November 2025, a coworker demonstrated GitHub Copilot in Visual Studio Code to team members. He was amazed at how quickly he could get working prototypes up and running. At first, I found it interesting, but as his demonstration continued, I too was amazed.

Over November and December, the team independently started experimenting with coding assistants to see what they could do, what mistakes they would make, and what best practices we could devise for using them in production code.

In December, we shipped our first AI-assisted project, weeks ahead of schedule and with better documentation than in many prior projects.

By January and February 2026, we were routinely using AI to explore and document older codebases, as well as to assist with maintenance and modernization efforts. We even began investigating ways to use AI to reduce accumulated technical debt, which is ironic, since one common complaint about AI agents is that they add to a codebase’s technical debt.

As of March 1, 2026, all team members use coding AI as an efficiency tool at work on a daily basis. All of us are also pursuing side projects after hours, experimenting with AI coding agents to push their limits, identify where they fail, and determine where they add value. Several of us have created functional web apps that solve problems we had long intended to address through traditional development but never found the time for. And all of us are working on passion projects—mostly game-related—that would traditionally require several programmers and artists weeks to prototype, but which can now be prototyped in several nights by one software engineer.

In the coming weeks, I will present some of these passion projects. I will also provide posts on the best ways to fully and deeply understand—or “grok”—Large Language Models and agentic AI, so that you do not have to treat them as black boxes and can more fully grasp their problems and usefulness in software development.

Additionally, I will blog about the societal implications of the rapid rise of AI in general, with thoughts on how to not only survive but thrive, and what to teach your children to do the same.

For now, I will present a one-off project that I created on a whim last night. A coworker was investigating realistic gem rendering on screen. He considered existing game engines, including Godot and Unity, to give him a head start. He used AI to assist, but he was not getting the results he wanted in Godot, so he switched to Unity. He reported decent results—this was Friday, two days ago—but I suggested he look into web-based technologies, as they would provide cross-platform availability out of the box.

That night, I wondered how easy it would be to create a web-based gem renderer. While half-watching TV with my wife and daughter, I decided to have Claude Opus and Claude Sonnet help me develop one. I wrote a detailed software specification, omitting the rendering technologies so that the AI could recommend them. I then had Claude Opus create the plan and Claude Sonnet execute it.

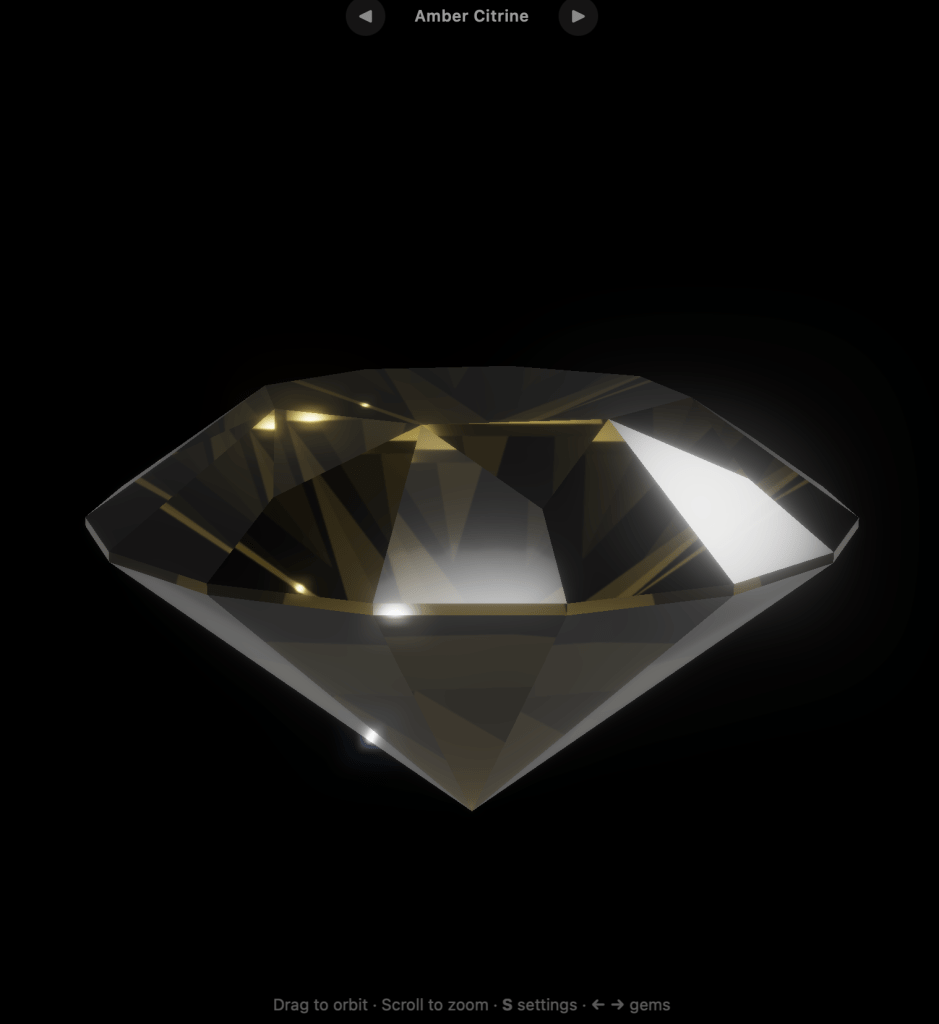

The result is a web app, built with three.js, that renders gemstones in real time.

On the live website, the gem autorotates in real time, and you can drag with the mouse to view it from different orientations. You can also switch gems (Diamond, Sapphire, Emerald, Citrine) and change some of the render parameters using on-screen sliders.
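For readers curious how controls like these might be modeled, here is a minimal sketch in TypeScript. It is not taken from the gem-render repo: the names `GEM_PRESETS`, `ViewState`, `step`, and `switchGem` are hypothetical, the refractive indices are approximate textbook values, and the tint colors are purely illustrative. It deliberately leaves out three.js and only shows the state a renderer could read each frame.

```typescript
// Hypothetical sketch; none of these names come from the actual project.
type GemName = "Diamond" | "Sapphire" | "Emerald" | "Citrine";

interface GemPreset {
  ior: number;   // approximate index of refraction (textbook value)
  color: string; // illustrative tint, not a measured color
}

const GEM_PRESETS: Record<GemName, GemPreset> = {
  Diamond: { ior: 2.42, color: "#ffffff" },
  Sapphire: { ior: 1.77, color: "#0f52ba" },
  Emerald: { ior: 1.58, color: "#50c878" },
  Citrine: { ior: 1.55, color: "#e4d00a" },
};

interface ViewState {
  gem: GemName;
  yaw: number;         // rotation about the vertical axis, in radians
  autoRotate: boolean; // a real app might pause this while the user drags
  speed: number;       // autorotation speed, in radians per second
}

// Advance the autorotation by dtSeconds, keeping yaw within one full turn.
function step(state: ViewState, dtSeconds: number): ViewState {
  if (!state.autoRotate) return state;
  const yaw = (state.yaw + state.speed * dtSeconds) % (2 * Math.PI);
  return { ...state, yaw };
}

// Switch the active gem without disturbing the current orientation.
function switchGem(state: ViewState, gem: GemName): ViewState {
  return { ...state, gem };
}

// Look up the material parameters a renderer would use for the active gem.
function activePreset(state: ViewState): GemPreset {
  return GEM_PRESETS[state.gem];
}
```

In a real renderer, `step` would be called once per animation frame with the elapsed time, and the slider handlers would write into whatever structure holds the render parameters.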

It’s important to note that, without human intervention, the concept of “aesthetics” often eludes current LLMs, especially in a coding context. They are getting better at it, but developers will often have to coach the LLM: “move it more to the left so that it is in line with the text above”, or “the spaceship looks like a rectangle, it was supposed to be a chevron”, or even “that gem looks like *ss, let’s brainstorm ways to fix it.”

This is where I believe that humans will always be needed. We may get to a point where AI can render a movie for a consumer ‘on the fly’, but will it be good? Coherent? Watchable for more than 5 minutes?

This came into focus when I tried to work with an LLM to convert the web-based gem renderer to Vulkan, to run natively on macOS and Windows. The first attempt looked like *ss. And, after several hours of re-prompting and coaching, it still looked like *ss. However, I have a plan for getting the LLM agent to convert the renderer to Vulkan in a way that exactly replicates the WebGL renderer. Stay tuned.

If you are interested in trying the web version of the gem renderer, the live website is here: https://rgmarquez.github.io/gem-render/

The GitHub repo is here: https://github.com/rgmarquez/gem-render